Hidden Language: Latent Communication in AI

Model Language Beyond Tokens

Recent discussions around model language have highlighted the growing gap between the representations used internally by large models and the symbolic forms exposed to users. While much of this discourse remains conceptual, recent work on latent communication in multi-agent systems makes this distinction operational.

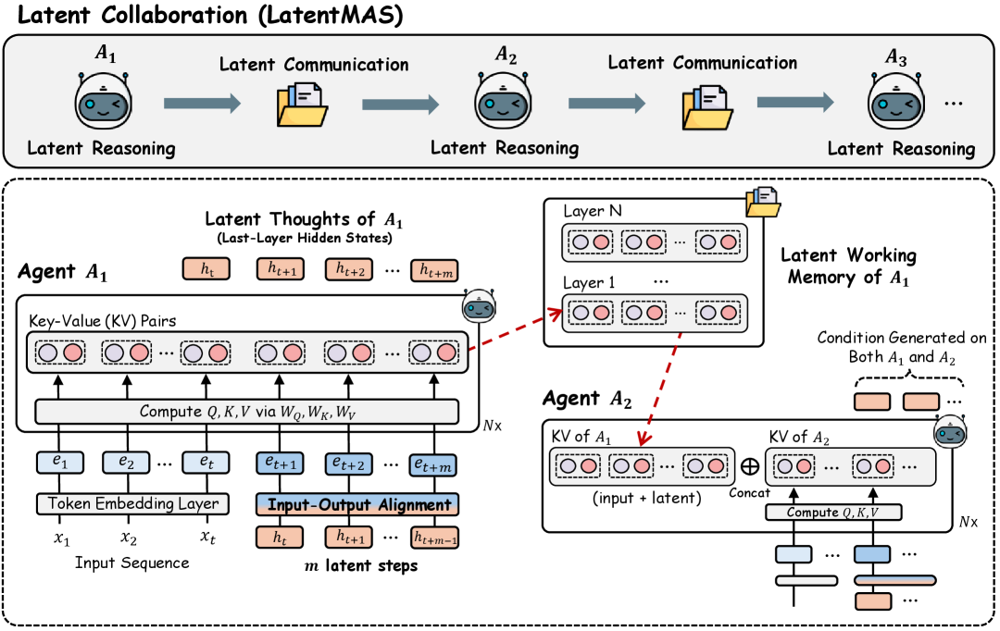

The paper Latent Collaboration in Multi-Agent Systems (Zou et al., 2025) proposes a multi-agent architecture in which agents exchange information exclusively through latent representations rather than natural language tokens. This design choice is motivated by efficiency and expressivity, and empirical results suggest meaningful gains along those axes.

Removing language as the medium of interaction directly affects observability, traceability, and the role of language as an interface for human oversight. These consequences follow from the architecture itself rather than from any particular failure mode.

Latent Communication and the Limits of Human Legibility

A useful cultural reference point is the film Her (2013), in which artificial assistants progressively abandon human language in favor of faster, private modes of communication. The relevance of the analogy is not emotional but structural: once coordination no longer occurs in a shared symbolic medium, external observers lose direct access to intermediate reasoning processes.

LatentMAS represents an instance of this shift in a concrete technical setting. The system does not merely compress language; it bypasses it. As a result, standard tools for inspecting agent behavior — dialog logs, message attribution, stepwise reasoning traces — are no longer available by construction.

This architecture departs from assumptions underlying much of the interpretability literature on multi-agent systems, which typically presumes language-mediated interaction as a source of transparency.

LatentMAS Explained

In LatentMAS, each agent operates as a large language model but communicates by passing internal hidden states — specifically, layer-wise representations and key–value (KV) caches — directly to downstream agents. Intermediate agents do not decode these representations into text; only the final agent produces a natural-language output.

This design yields several immediate consequences:

- Communication bandwidth between agents is significantly higher than token-based exchange

- Information loss from repeated encoding and decoding is reduced

- Inference becomes faster due to reduced token generation

Importantly, these gains are achieved without additional training. The architecture exploits existing model representations rather than modifying the underlying models themselves.

From a systems perspective, LatentMAS reframes multi-agent coordination as a problem of representation sharing rather than message passing. The latent space effectively becomes a communication protocol.

Transparency and Traceability in the Era of Model Language

Latent communication reduces observability.

In text-based multi-agent systems, reasoning is externalized as part of communication.

Even when explanations are imperfect, interaction structure remains accessible: which agent proposed which hypothesis, how disagreements were resolved, and where information entered the system.

Latent communication removes this structure.

Observers have access to inputs and outputs, but not to intermediate interactions.

Attribution of influence becomes unclear, and standard notions of traceability no longer apply.

This introduces a distinct interpretability problem:

- Coordination occurs in continuous spaces without discrete steps

- Failures lack symbolic interaction traces for auditing or debugging

- Properties of agent interaction must be inferred indirectly

Interpretability in this setting requires new abstractions suited to latent interaction rather than explicit message exchange.

Research Directions: Toward Interpretable Latent Collaboration

Rather than treating latent communication as inherently opaque, it is more productive to ask what forms of partial observability are feasible and sufficient. Several research directions follow naturally.

1. Latent-to-Text Decoding

One approach is to learn mappings from latent interaction trajectories to human-interpretable descriptions. Given access to latent states, task context, and final outputs, a separate model could be trained to produce structured summaries or rationales corresponding to internal coordination.

Key research questions include:

- What semantic information is recoverable from latent exchanges?

- Are there stable, task-independent patterns in latent communication?

- How faithful must a decoding be to support debugging, auditing, or analysis?

This frames interpretability as supervised inference over interaction representations.

2. Architectures with Enforced Trace Points

A complementary direction is architectural. Instead of fully unconstrained latent exchange, systems could be designed with periodic projection into interpretable or constrained subspaces.

Examples include:

- Mandatory intermediate summaries at fixed depths

- Bottlenecks aligned with known semantic dimensions

- Hybrid systems where latent exchange is interleaved with minimal symbolic checkpoints

Traceability becomes a design constraint rather than a post-hoc analysis problem.

3. Monitoring and Meta-Reasoning Agents

A third direction is to decouple task performance from oversight. Dedicated monitoring agents could observe latent exchanges and reason about properties of the interaction without participating in task solving.

Such agents might assess:

- Degree of agreement or divergence among agents

- Sensitivity of conclusions to specific latent inputs

- Structural properties of coordination (e.g., dominance, convergence, collapse)

This shifts interpretability from reconstructing internal content to analyzing interaction dynamics.

Closing Remarks

Latent communication in multi-agent systems is likely to become more common as efficiency and performance pressures increase. The contribution of LatentMAS is not only empirical but conceptual: it demonstrates that language is not a necessary medium for coordination among language models.

The corresponding research challenge is to develop frameworks for understanding, monitoring, and analyzing systems whose internal interactions are not naturally human-readable. Addressing this challenge will require new abstractions, new evaluation metrics, and potentially new architectural constraints.

There is substantial open research space at the intersection of representation learning, multi-agent systems, and interpretability.